LU decomposition

In linear algebra, LU decomposition (also called LU factorization) is a matrix decomposition which writes a matrix as the product of a lower triangular matrix and an upper triangular matrix. The product sometimes includes a permutation matrix as well. This decomposition is used in numerical analysis to solve systems of linear equations or to calculate the determinant of a matrix. LU decomposition can be viewed as a matrix form of Gaussian elimination. It was introduced by the mathematician Alan Turing.[1]


Definitions

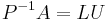

Let A be a square matrix. An LU decomposition is a decomposition of the form $A = LU$,

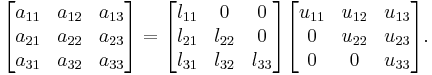

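where L and U are lower and upper triangular matrices (of the same size), respectively. This means that L has only zeros above the diagonal and U has only zeros below the diagonal. For a 3 × 3 matrix, this becomes:

$$
\begin{bmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{bmatrix}
=
\begin{bmatrix} l_{11} & 0 & 0 \\ l_{21} & l_{22} & 0 \\ l_{31} & l_{32} & l_{33} \end{bmatrix}
\begin{bmatrix} u_{11} & u_{12} & u_{13} \\ 0 & u_{22} & u_{23} \\ 0 & 0 & u_{33} \end{bmatrix}.
$$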

An LDU decomposition is a decomposition of the form

$$A = LDU,$$

where D is a diagonal matrix and L and U are unit triangular matrices, meaning that all the entries on the diagonals of L and U are one.

An LUP decomposition (also called an LU decomposition with partial pivoting) is a decomposition of the form

$$PA = LU,$$

where L and U are again lower and upper triangular matrices and P is a permutation matrix, i.e., a matrix of zeros and ones that has exactly one entry 1 in each row and column.

An LU decomposition with full pivoting (Trefethen and Bau) takes the form

$$PAQ = LU,$$

where Q is a second permutation matrix, acting on the columns of A.

Above we required that A be a square matrix, but these decompositions can all be generalized to rectangular matrices as well. In that case, L and P are square matrices which each have the same number of rows as A, while U is exactly the same shape as A. Upper triangular should be interpreted as having only zero entries below the main diagonal, which starts at the upper left corner.

Existence and uniqueness

An invertible matrix admits an LU factorization if and only if all its leading principal minors are non-zero. The factorization is unique if we require that the diagonal of L (or U) consist of ones. The matrix has a unique LDU factorization under the same conditions.

If the matrix is singular, then an LU factorization may still exist. In fact, a square matrix of rank k has an LU factorization if the first k leading principal minors are non-zero, although the converse is not true.

The exact necessary and sufficient conditions under which a not necessarily invertible matrix over any field has an LU factorization are known. The conditions are expressed in terms of the ranks of certain submatrices. The Gaussian elimination algorithm for obtaining LU decomposition has also been extended to this most general case (Okunev & Johnson 1997).

Every invertible matrix A admits an LUP factorization.
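The leading-minor criterion above can be checked directly for small matrices. The following is a minimal sketch, assuming exact rational arithmetic via `fractions.Fraction`; the function names are illustrative and not taken from any library.

```python
from fractions import Fraction


def det(m):
    """Exact determinant by Gaussian elimination with row swaps."""
    a = [[Fraction(x) for x in row] for row in m]
    n, sign, result = len(a), 1, Fraction(1)
    for k in range(n):
        # Find a row at or below k with a nonzero entry in column k.
        pivot = next((r for r in range(k, n) if a[r][k] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != k:
            a[k], a[pivot] = a[pivot], a[k]
            sign = -sign
        result *= a[k][k]
        for r in range(k + 1, n):
            f = a[r][k] / a[k][k]
            for c in range(k, n):
                a[r][c] -= f * a[k][c]
    return sign * result


def leading_principal_minors(m):
    """Determinants of the top-left k x k blocks, k = 1..n."""
    return [det([row[:k] for row in m[:k]]) for k in range(1, len(m) + 1)]


def has_lu_without_pivoting(m):
    """Criterion for an invertible matrix: every leading minor is nonzero."""
    return all(d != 0 for d in leading_principal_minors(m))
```

For instance, the invertible matrix `[[0, 1], [1, 0]]` has a zero first leading minor, so it has no LU factorization, although it does have an LUP factorization.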

Positive definite matrices

If the matrix A is Hermitian and positive definite, then we can arrange matters so that U is the conjugate transpose of L. In this case, we have written A as

$$A = L L^{*}.$$

This decomposition is called the Cholesky decomposition. For a Hermitian positive definite matrix, the Cholesky decomposition always exists, and it is unique if the diagonal entries of L are required to be positive. Furthermore, computing the Cholesky decomposition is more efficient and numerically more stable than computing some other LU decompositions.
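As an illustration, here is a minimal sketch of the Cholesky decomposition restricted to the real symmetric case (an assumption made for brevity; the Hermitian case would also need complex conjugation). The function name and the test matrix are illustrative.

```python
import math


def cholesky(a):
    """Return lower triangular L with a = L L^T, for real symmetric positive definite a."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            # Inner product of the already computed parts of rows i and j.
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


L = cholesky([[4, 12, -16], [12, 37, -43], [-16, -43, 98]])
# L is [[2, 0, 0], [6, 1, 0], [-8, 5, 3]] up to rounding
```

Only about half as much arithmetic is needed as for a general LU decomposition, because the upper factor is never computed separately.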

Explicit formulation

When an LDU factorization exists and is unique, there is a closed (explicit) formula for the elements of L, D, and U in terms of ratios of determinants of certain submatrices of the original matrix A (Householder 1975). In particular, $D_1 = A_{1,1}$, and for $i = 2, \ldots, n$, $D_i$ is the ratio of the determinant of the $i$-th leading principal submatrix to the determinant of the $(i-1)$-th leading principal submatrix.
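As a small worked illustration of the ratio formula for D, take the 2 × 2 matrix below (chosen here purely as an example). Its leading principal submatrices have determinants 4 and −6, so

$$
A = \begin{bmatrix} 4 & 3 \\ 6 & 3 \end{bmatrix}, \qquad
D_1 = 4, \qquad
D_2 = \frac{\det A}{D_1} = \frac{4 \cdot 3 - 3 \cdot 6}{4} = -\frac{3}{2},
$$

and the corresponding LDU factorization, which can be verified by multiplying out, is

$$
\begin{bmatrix} 4 & 3 \\ 6 & 3 \end{bmatrix}
=
\begin{bmatrix} 1 & 0 \\ \tfrac{3}{2} & 1 \end{bmatrix}
\begin{bmatrix} 4 & 0 \\ 0 & -\tfrac{3}{2} \end{bmatrix}
\begin{bmatrix} 1 & \tfrac{3}{4} \\ 0 & 1 \end{bmatrix}.
$$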

Algorithms

The LU decomposition is essentially a modified form of Gaussian elimination. We transform the matrix A into an upper triangular matrix U by eliminating the entries below the main diagonal. The Doolittle algorithm does the elimination column by column, starting from the left, by multiplying A on the left by atomic lower triangular matrices. It results in a unit lower triangular matrix and an upper triangular matrix. The Crout algorithm is slightly different and constructs a lower triangular matrix and a unit upper triangular matrix.

Computing the LU decomposition using either of these algorithms requires $2n^3/3$ floating point operations, ignoring lower order terms. Partial pivoting adds only a quadratic term; this is not the case for full pivoting.[2]
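To make the difference between the two variants concrete, the following is a minimal sketch of the Crout variant without pivoting: it fills L column by column and U row by row, and keeps ones on the diagonal of U. The function name is illustrative, and the sketch simply fails if a zero pivot appears.

```python
def crout(a):
    """Return (L, U) with a = L U, L lower triangular, U unit upper triangular."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    U = [[float(i == j) for j in range(n)] for i in range(n)]
    for j in range(n):
        # Column j of L, from the diagonal downwards.
        for i in range(j, n):
            L[i][j] = a[i][j] - sum(L[i][k] * U[k][j] for k in range(j))
        if L[j][j] == 0:
            raise ZeroDivisionError("zero pivot: pivoting would be needed")
        # Row j of U, to the right of the unit diagonal.
        for i in range(j + 1, n):
            U[j][i] = (a[j][i] - sum(L[j][k] * U[k][i] for k in range(j))) / L[j][j]
    return L, U


L, U = crout([[4, 3], [6, 3]])
# L == [[4, 0], [6, -1.5]] and U == [[1, 0.75], [0, 1]]
```

The three nested loops over n are where the cubic operation count comes from.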

Doolittle algorithm

Given an N × N matrix

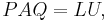

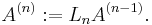

we define

and then we iterate n = 1,...,N-1 as follows.

We eliminate the matrix elements below the main diagonal in the n-th column of A(n-1) by adding to the i-th row of this matrix the n-th row multiplied by

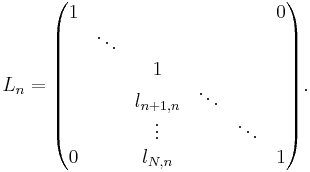

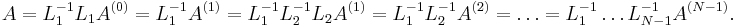

for  . This can be done by multiplying A(n-1) to the left with the lower triangular matrix

. This can be done by multiplying A(n-1) to the left with the lower triangular matrix

We set

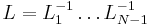

After N-1 steps, we have eliminated all the matrix elements below the main diagonal, so we obtain an upper triangular matrix $A^{(N-1)}$. We find the decomposition

$$A = L_1^{-1} L_1 A^{(0)} = L_1^{-1} A^{(1)} = L_1^{-1} L_2^{-1} L_2 A^{(1)} = L_1^{-1} L_2^{-1} A^{(2)} = \cdots = L_1^{-1} \cdots L_{N-1}^{-1} A^{(N-1)}.$$

Denote the upper triangular matrix $A^{(N-1)}$ by U, and $L = L_1^{-1} \cdots L_{N-1}^{-1}$. Because the inverse of a lower triangular matrix $L_n$ is again a lower triangular matrix, and the product of two lower triangular matrices is again a lower triangular matrix, it follows that L is a lower triangular matrix. Moreover, writing $l_{i,n}$ for the multiplier applied to the n-th row when it is added to the i-th row in step n, it can be seen that

$$
L = \begin{bmatrix}
1 & & & & \\
-l_{2,1} & 1 & & & \\
-l_{3,1} & -l_{3,2} & 1 & & \\
\vdots & \vdots & \ddots & \ddots & \\
-l_{N,1} & -l_{N,2} & \cdots & -l_{N,N-1} & 1
\end{bmatrix}.
$$

We obtain $A = LU$.

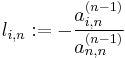

It is clear that in order for this algorithm to work, one needs the (n, n) entry of $A^{(n-1)}$ to be nonzero, $a^{(n-1)}_{n,n} \neq 0$, at each step (see the definition of the multiplier $l_{i,n}$, in which this entry is the denominator). If this assumption fails at some point, one needs to interchange the n-th row with another row below it before continuing. This is why the LU decomposition in general looks like $PA = LU$ for some permutation matrix P.
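The elimination described above, combined with row interchanges, can be sketched as follows. This is a minimal illustration rather than a reference implementation: the function name is hypothetical, the row chosen for each interchange is the one with the largest entry in absolute value (the usual partial pivoting rule, assumed here), and the permutation is returned as a list of row indices instead of a matrix. The value stored in L is the ratio of the eliminated entry to the pivot, so that the rows of A, reordered, equal the product LU.

```python
def lu_partial_pivoting(a):
    """Return (perm, L, U) such that row perm[i] of a equals row i of L U."""
    n = len(a)
    U = [[float(x) for x in row] for row in a]
    L = [[0.0] * n for _ in range(n)]
    perm = list(range(n))
    for k in range(n):
        # Choose the row at or below k with the largest entry in column k.
        p = max(range(k, n), key=lambda r: abs(U[r][k]))
        if U[p][k] == 0.0:
            raise ValueError("matrix is singular")
        if p != k:
            U[k], U[p] = U[p], U[k]
            L[k], L[p] = L[p], L[k]  # carry along multipliers already stored
            perm[k], perm[p] = perm[p], perm[k]
        L[k][k] = 1.0
        for i in range(k + 1, n):
            m = U[i][k] / U[k][k]
            L[i][k] = m
            U[i][k] = 0.0
            for j in range(k + 1, n):
                U[i][j] -= m * U[k][j]
    return perm, L, U


perm, L, U = lu_partial_pivoting([[0, 1], [1, 1]])
# perm == [1, 0]: the two rows are exchanged because the first pivot is zero
```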

Crout and LUP algorithms

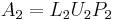

The LUP decomposition algorithm by Cormen et al. generalizes Crout matrix decomposition. It can be described as follows.

- If

has a nonzero entry in its first row, then take a permutation matrix

has a nonzero entry in its first row, then take a permutation matrix  such that

such that  has a nonzero entry in its upper left corner. Otherwise, take for

has a nonzero entry in its upper left corner. Otherwise, take for  the identity matrix. Let

the identity matrix. Let  .

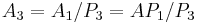

. - Let

be the matrix that one gets from

be the matrix that one gets from  by deleting both the first row and the first column. Decompose

by deleting both the first row and the first column. Decompose  recursively. Make

recursively. Make  from

from  by first adding a zero row above and then adding the first column of

by first adding a zero row above and then adding the first column of  at the left.

at the left. - Make

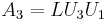

from

from  by first adding a zero row above and a zero column at the left and then replacing the upper left entry (which is 0 at this point) by 1. Make

by first adding a zero row above and a zero column at the left and then replacing the upper left entry (which is 0 at this point) by 1. Make  from

from  in a similar manner and define

in a similar manner and define  . Let

. Let  be the inverse of

be the inverse of  .

. - At this point,

is the same as

is the same as  , except (possibly) at the first row. If the first row of

, except (possibly) at the first row. If the first row of  is zero, then

is zero, then  , since both have first row zero, and

, since both have first row zero, and  follows, as desired. Otherwise,

follows, as desired. Otherwise,  and

and  have the same nonzero entry in the upper left corner, and

have the same nonzero entry in the upper left corner, and  for some upper triangular square matrix

for some upper triangular square matrix  with ones on the diagonal (

with ones on the diagonal ( clears entries of

clears entries of  and adds entries of

and adds entries of  by way of the upper left corner). Now

by way of the upper left corner). Now  is a decomposition of the desired form.

is a decomposition of the desired form.

Theoretical complexity

If two matrices of order n can be multiplied in time $M(n)$, where $M(n) \ge n^a$ for some $a > 2$, then the LU decomposition can be computed in time $O(M(n))$.[3] This means, for example, that an $O(n^{2.376})$ algorithm exists based on the Coppersmith–Winograd algorithm.

Small example

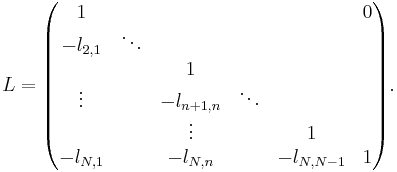

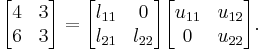

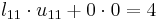

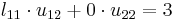

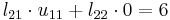

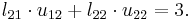

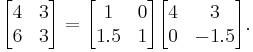

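One way of finding the LU decomposition of this simple 2 × 2 matrix is to solve the linear equations by inspection. Writing the entries of A as $a_{ij}$ and expanding the product LU entry by entry gives:

$$
\begin{aligned}
l_{11} u_{11} &= a_{11}, \\
l_{11} u_{12} &= a_{12}, \\
l_{21} u_{11} &= a_{21}, \\
l_{21} u_{12} + l_{22} u_{22} &= a_{22}.
\end{aligned}
$$

Such a system of equations is underdetermined: there are four equations in six unknowns. Two of the unknowns, for example the diagonal entries of L, are therefore free parameters of the solution and can be set to any non-zero values. To find a unique LU decomposition, it is necessary to put some restriction on the L and U matrices. For example, we can require the lower triangular matrix L to be a unit one (i.e. set all the entries of its main diagonal to ones). Then, provided $a_{11} \neq 0$, the system of equations has the following solution:

$$l_{21} = \frac{a_{21}}{a_{11}}, \qquad u_{11} = a_{11}, \qquad u_{12} = a_{12}, \qquad u_{22} = a_{22} - \frac{a_{21} a_{12}}{a_{11}}.$$

Substituting these values into the LU decomposition above:

$$
\begin{bmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{bmatrix}
=
\begin{bmatrix} 1 & 0 \\ a_{21}/a_{11} & 1 \end{bmatrix}
\begin{bmatrix} a_{11} & a_{12} \\ 0 & a_{22} - a_{21} a_{12}/a_{11} \end{bmatrix}.
$$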
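The "solve by inspection" procedure for a 2 × 2 matrix with a unit lower triangular L can be written out in a few lines. This is a minimal sketch; the function name is illustrative, and the sample matrix in the usage line is an assumption made for this example.

```python
def lu_2x2_unit_lower(a):
    """LU decomposition of a 2 x 2 matrix with ones on the diagonal of L."""
    (a11, a12), (a21, a22) = a
    if a11 == 0:
        raise ZeroDivisionError("a11 must be nonzero without row exchanges")
    # The first row of L U gives the first row of U directly.
    u11, u12 = a11, a12
    # The (2, 1) entry gives l21, and the (2, 2) entry then gives u22.
    l21 = a21 / a11
    u22 = a22 - l21 * u12
    return [[1, 0], [l21, 1]], [[u11, u12], [0, u22]]


L, U = lu_2x2_unit_lower([[4, 3], [6, 3]])
# L == [[1, 0], [1.5, 1]] and U == [[4, 3], [0, -1.5]]
```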

Sparse matrix decomposition

Special algorithms have been developed for factorizing large sparse matrices. These algorithms attempt to find sparse factors L and U. Ideally, the cost of computation is determined by the number of nonzero entries, rather than by the size of the matrix.

These algorithms use the freedom to exchange rows and columns to minimize fill-in (entries which change from an initial zero to a non-zero value during the execution of an algorithm).

General treatment of orderings that minimize fill-in can be addressed using graph theory.
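The effect of ordering on fill-in can be seen on a small "arrowhead" matrix, used here only as an illustrative example: nonzero on the diagonal and in one row and one column. The sketch below, with hypothetical function names, runs elimination without pivoting in exact arithmetic and counts the nonzero entries of the combined factors.

```python
from fractions import Fraction


def lu_nonzeros(a):
    """Count nonzeros of L (strictly lower part) and U after elimination."""
    n = len(a)
    m = [[Fraction(x) for x in row] for row in a]
    for k in range(n):
        for i in range(k + 1, n):
            if m[i][k] != 0:
                m[i][k] /= m[k][k]  # multiplier, stored where L keeps it
                for j in range(k + 1, n):
                    m[i][j] -= m[i][k] * m[k][j]
    return sum(1 for row in m for x in row if x != 0)


def arrow_matrix(n, dense_first=True):
    """Diagonal matrix plus one full row and column (first or last)."""
    a = [[0] * n for _ in range(n)]
    hub = 0 if dense_first else n - 1
    for i in range(n):
        a[i][i] = n
        a[hub][i] = a[i][hub] = 1
    a[hub][hub] = n
    return a


print(lu_nonzeros(arrow_matrix(5, dense_first=True)))   # 25: factors fill in completely
print(lu_nonzeros(arrow_matrix(5, dense_first=False)))  # 13: no fill-in at all
```

Both matrices have 13 nonzero entries and differ only by a simultaneous reordering of rows and columns, yet one ordering produces completely dense factors and the other none of the fill-in.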

Applications

Solving linear equations

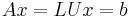

Given a matrix equation

$$Ax = LUx = b,$$

we want to solve the equation for x given A and b. In this case the solution is done in two logical steps:

- First, we solve the equation $Ly = b$ for y.

- Second, we solve the equation $Ux = y$ for x.

Note that in both cases we have triangular matrices (lower and upper) which can be solved directly using forward and backward substitution without using the Gaussian elimination process (however we need this process or equivalent to compute the LU decomposition itself). Thus the LU decomposition is computationally efficient only when we have to solve a matrix equation multiple times for different b; it is faster in this case to do an LU decomposition of the matrix A once and then solve the triangular matrices for the different b, than to use Gaussian elimination each time.
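The two triangular solves can be sketched as follows. This is a minimal illustration with hypothetical function names; it assumes L and U are already available and have nonzero diagonal entries.

```python
def forward_substitution(L, b):
    """Solve L y = b for lower triangular L, from the first row down."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][j] * y[j] for j in range(i))) / L[i][i]
    return y


def back_substitution(U, y):
    """Solve U x = y for upper triangular U, from the last row up."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(U[i][j] * x[j] for j in range(i + 1, n))) / U[i][i]
    return x


def lu_solve(L, U, b):
    """Solve L U x = b by one forward and one backward substitution."""
    return back_substitution(U, forward_substitution(L, b))


# Factors of the example matrix [[4, 3], [6, 3]], reused for two right-hand sides.
L = [[1, 0], [1.5, 1]]
U = [[4, 3], [0, -1.5]]
print(lu_solve(L, U, [10, 12]))  # [1.0, 2.0]
print(lu_solve(L, U, [4, 6]))    # [1.0, 0.0]
```

Each call costs on the order of n² operations, whereas computing the factors costs on the order of n³, which is why reusing them across right-hand sides pays off.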

Inverse matrix

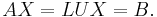

When solving systems of equations, b is usually treated as a vector with a length equal to the height of matrix A. Suppose that instead of a vector b we have a matrix B, where B is an n-by-p matrix, so that we are trying to find a matrix X (also an n-by-p matrix) such that

$$AX = LUX = B.$$

We can use the same algorithm presented earlier to solve for each column of matrix X. Now suppose that B is the identity matrix of size n. It would follow that the result X must be the inverse of A.[4]
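A minimal sketch of this column-by-column inversion is given below. For brevity it assumes that no row exchanges are needed (it raises an error on a zero pivot), and all function names are illustrative.

```python
def lu_no_pivot(a):
    """Return (L, U) with unit lower triangular L, assuming nonzero pivots."""
    n = len(a)
    L = [[float(i == j) for j in range(n)] for i in range(n)]
    U = [[float(x) for x in row] for row in a]
    for k in range(n):
        if U[k][k] == 0.0:
            raise ZeroDivisionError("zero pivot: row exchanges would be needed")
        for i in range(k + 1, n):
            L[i][k] = U[i][k] / U[k][k]
            U[i][k] = 0.0
            for j in range(k + 1, n):
                U[i][j] -= L[i][k] * U[k][j]
    return L, U


def solve(L, U, b):
    """Solve L U x = b; L is assumed to have a unit diagonal."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = b[i] - sum(L[i][j] * y[j] for j in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(U[i][j] * x[j] for j in range(i + 1, n))) / U[i][i]
    return x


def inverse(a):
    """Invert a by solving A x = e_j for every column e_j of the identity."""
    n = len(a)
    L, U = lu_no_pivot(a)  # factor once, reuse for all n right-hand sides
    cols = [solve(L, U, [float(i == j) for i in range(n)]) for j in range(n)]
    return [[cols[j][i] for j in range(n)] for i in range(n)]


inv = inverse([[4, 3], [6, 3]])
# inv is approximately [[-0.5, 0.5], [1.0, -0.6667]]
```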

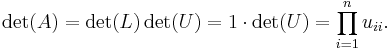

Determinant

The matrices L and U can be used to compute the determinant of the matrix A very quickly, because det(A) = det(L) det(U) and the determinant of a triangular matrix is simply the product of its diagonal entries. In particular, if L is a unit triangular matrix, then

$$\det(A) = \det(L) \det(U) = 1 \cdot \det(U) = \prod_{i=1}^{n} u_{ii}.$$

The same approach can be used for LUP decompositions. The determinant of the permutation matrix P is $(-1)^S$, where S is the number of row exchanges in the decomposition.
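The determinant computation can be sketched as follows: eliminate with row exchanges, count the exchanges, and multiply the diagonal of U by the resulting sign. The function name is illustrative, and the pivot is chosen as the largest entry in absolute value in its column (an assumption of this sketch).

```python
def determinant(a):
    """Determinant as (-1)^S times the product of the diagonal of U."""
    n = len(a)
    U = [[float(x) for x in row] for row in a]
    swaps = 0
    for k in range(n):
        p = max(range(k, n), key=lambda r: abs(U[r][k]))
        if U[p][k] == 0.0:
            return 0.0  # no nonzero pivot in this column: singular matrix
        if p != k:
            U[k], U[p] = U[p], U[k]
            swaps += 1
        for i in range(k + 1, n):
            m = U[i][k] / U[k][k]
            for j in range(k, n):
                U[i][j] -= m * U[k][j]
    det = -1.0 if swaps % 2 else 1.0
    for k in range(n):
        det *= U[k][k]
    return det


d = determinant([[4, 3], [6, 3]])
# d is -6 up to rounding: one row exchange, then diagonal entries 6 and 1
```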


References

- [1] Poole, David (2006), Linear Algebra: A Modern Introduction (2nd ed.), Canada: Thomson Brooks/Cole, ISBN 0-534-99845-3.

- [2] Golub, Gene H.; Van Loan, Charles F. (1996), Matrix Computations (3rd ed.), Baltimore: Johns Hopkins, ISBN 978-0-8018-5414-9.

- [3] Bunch, J. R.; Hopcroft, J. E. (1974), "Triangular factorization and inversion by fast matrix multiplication", Mathematics of Computation, 28: 231–236.

- [4] Golub, Gene H.; Van Loan, Charles F. (1996), Matrix Computations (3rd ed.), p. 121.

- Bau III, David; Trefethen, Lloyd N. (1997), Numerical linear algebra, Philadelphia: Society for Industrial and Applied Mathematics, ISBN 978-0-89871-361-9

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2001), Introduction to Algorithms, MIT Press and McGraw-Hill, ISBN 978-0-262-03293-3

- Horn, Roger A.; Johnson, Charles R. (1985), Matrix Analysis, Cambridge University Press, ISBN 0-521-38632-2. See Section 3.5.

- Householder, Alston (1975), The Theory of Matrices in Numerical Analysis.

- Okunev, Pavel; Johnson, Charles (1997), Necessary And Sufficient Conditions For Existence of the LU Factorization of an Arbitrary Matrix, arXiv:math.NA/0506382.

- Press, WH; Teukolsky, SA; Vetterling, WT; Flannery, BP (2007), "Section 2.3", Numerical Recipes: The Art of Scientific Computing (3rd ed.), New York: Cambridge University Press, ISBN 978-0-521-88068-8, http://apps.nrbook.com/empanel/index.html?pg=48

External links

References

- LU decomposition on MathWorld.

- LU decomposition on Math-Linux.

- Module for LU Factorization with Pivoting, Prof. J. H. Mathews, California State University, Fullerton

- LU decomposition at Holistic Numerical Methods Institute

Computer code

- LAPACK is a collection of FORTRAN subroutines for solving dense linear algebra problems

- ALGLIB includes a partial port of LAPACK to C++, C#, Delphi, etc.

- C++ code, Prof. J. Loomis, University of Dayton

- C code, Mathematics Source Library

- Pseudo-code: Gaussian elimination approach, Prof. P. Wapperom, Virginia Tech

- Pseudo-code: Doolittle algorithm, Prof. P. Wapperom, Virginia Tech

- LU in X10

Online resources

- WebApp descriptively solving systems of linear equations with LU Decomposition

- Matrix Calculator, bluebit.gr

- LU Decomposition Tool, uni-bonn.de

- LU Decomposition by Ed Pegg, Jr., The Wolfram Demonstrations Project, 2007.
